Documentation: it is the key to our tools. It has enabled us to evolve from coders into the preeminent engineers on the planet. Documentation is slow, normally taking as much time as the development itself, if not more. But every few hundred commits, development leaps forward – leaving documentation behind.

Professor Xavier, X-Men (if he were a software engineer rather than a mutant geneticist)

There is a war brewing between those who put their faith in the documentation and those who fear the prophecies of the staleness to come.

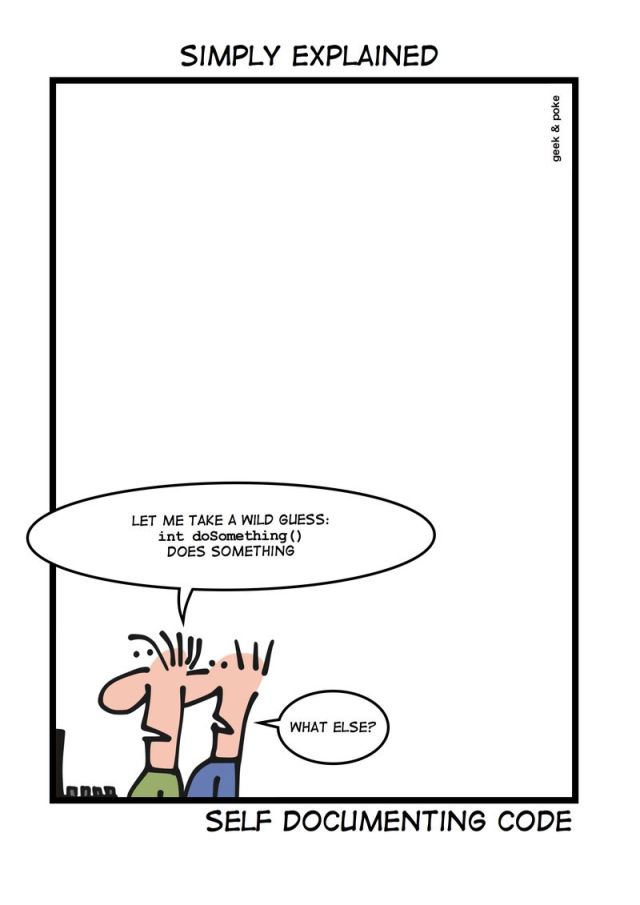

Documentation: Self-Documenting or Well-Documented

Do you think the thorough and comprehensive documentation of your project is enough to understand how to interact with it?

Do you think your code is so clean and intuitive that anyone can interface with it?

Everyone is wrong, and those who value tribal knowledge are toeing the line of true evil – but let’s leave them in the hole that they are digging for themselves. Every project starts with aspirations of perfection and standards of quality; admirable but completely unrealistic and unattainable.

Stale documentation absolutely will happen to your project. There is no way that documentation can be maintained at the same level and speed as the features and bug fixes – deadlines and goals change, small bits slip through the cracks. When it comes to documentation, words are nothing but representations of abstract ideas that no two people perceive in the same manner. No matter how thorough or complete you feel your documentation is, there will be those who do not understand; the more thorough your documentation, the harder it is to maintain.

Code is logical, as is the currently defined instruction set, which makes the concept of self-documenting code more appetizing. Yet code is also an art: it is interpreted by the individual, understood at different levels, and can appear to be absolute magic – and a magician never reveals their secret (hint: it’s a reflection). What is intuitive to me is not intuitive to you, and if we wrote code to be so intuitive and easy to pick up… well, we can’t. Even Blockly and Scratch, which are intended to introduce children to programming concepts, seem alien to many – and once you introduce concepts like Aspect-Oriented Programming and Monkey Patching, you have entered a realm most dare not.

So, yes, the documentation for your library, REST API or plugin will, at some point, fail. End of story.

Pitch the Stale Documentation, Having the Ripe Idea

Credit where credit is due: this concept was not mine but my best friend James’s. He had grown tired of outdated, incorrect, or flat-out misleading API documentation. Most documentation aggregation software collects information from conformingly formatted comments – JavaDoc, API Doc, C# XML – but comments are not meant to be ingested by the application they are describing and are often the first to be forgotten when there is a fire burning. We have accepted that tests should not be second-class citizens and that Test Driven Development is a proven standard; we should not accept documentation being a second-class citizen either.

There’s a motto amongst software engineers: “never trust your input”. But all this validating, verifying, authenticating… it’s boring, I am lazy, and why can’t it just validate itself and let me move on to the fun part?

To focus on the fun part, you have to automate the boring stuff.

Swagger is a specification for documenting REST APIs down to every detail: payload definitions, request specifications, conditional arguments, content-type, required permissions, return codes – everything. The best part? The specification is consumable. Swagger even includes a browseable user interface that describes every facet and even lets you interact with the server visually. Play with a live demo yourself.

In Douglas Adams’ Hitchhiker’s Guide to the Galaxy, a race of hyper-intelligent pan-dimensional beings designed and built a computer to provide the ultimate answer: the answer to Life, the Universe, and Everything. This computer’s name? Deep Thought. An apt name for a library that would handle the answer, as long as you handled the question. Our proof of concept, DeepThought-Routing, accepted your Swagger JSON specification along with endpoint handlers and a permissions provider as parameters and generated a Node.js router. That router validated paths, payloads, and permissions; parsed the request according to the Content-Type header; transformed the handler output to the MIME type requested in the Accept header; and attempted to coerce parameters to the data types in the specification. When a request failed validation, it returned the appropriate HTTP response code without invoking your handlers. You could trust your inputs with no validation of your own, free to focus on the core of the project – the fun part.
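The idea of a specification-driven router can be sketched in a few lines. This is not the DeepThought-Routing code (which targeted Node.js); it is a minimal Python illustration, and the `specs` dictionary, `make_endpoint`, and the `dive` handler are hypothetical names standing in for a far richer Swagger specification.

```python
from typing import Any, Callable, Dict, Tuple

# Hypothetical, heavily simplified stand-in for the parameter section of a
# Swagger operation; the real specification describes much more.
ParamSpecs = Dict[str, Dict[str, Any]]

# Maps a declared parameter type to the function that coerces a raw string.
_COERCERS: Dict[str, Callable[[str], Any]] = {
    "integer": int,
    "number": float,
    "string": str,
}


def make_endpoint(specs: ParamSpecs, handler: Callable[..., Any]):
    """Wrap a handler so it only ever sees validated, coerced parameters."""

    def endpoint(raw: Dict[str, str]) -> Tuple[int, Any]:
        clean: Dict[str, Any] = {}
        for name, spec in specs.items():
            if name not in raw:
                if spec.get("required", False):
                    # Reject the request without invoking the handler.
                    return 400, f"missing required parameter: {name}"
                continue
            try:
                clean[name] = _COERCERS[spec["type"]](raw[name])
            except ValueError:
                return 400, f"parameter {name} is not a valid {spec['type']}"
        return 200, handler(**clean)

    return endpoint


specs = {"depth": {"type": "number", "required": True}}
dive = make_endpoint(specs, lambda depth: {"depth": depth})
```

Calling `dive({"depth": "12.5"})` hands the handler a float, while `dive({})` and `dive({"depth": "deep"})` are answered with a 400 status before the handler is ever reached.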

DeepThought: Documentation Driven Development

Schema-First means you define the schema (or specification) for your code, then utilize tooling that generates the code that matches the definitions, constraints, and properties within your schema. Through DeepThought-Routing, we had proven the concept of self-validation. Swagger did the same for self-documentation. Code generation is a core concept in GraphQL, and JSON-Schema lists language-specific tooling for code generation.

There’s a pattern to all of this, and patterns can be automated. A spark appeared in my mind: “Instead of implicit meaning through validation of inputs and documentation of primitive data types – what if the meaning were explicit? And if the meaning is explicit, the data could validate itself!” That spark became an inferno of chaining the initial “what-if” to “if that, then we could…”. When the fire of inspiration slowly receded to glowing embers, what was left was an idea. An idea that could change how software development is approached or, at least, how I would approach development: Documentation Driven Development.

The Goals for Documentation Driven Development:

- Self-Documenting: Clean, human readable documentation generated directly from the schema

- Self-Describing: Meta-datatypes that provide meaning to the underlying data through labels and units

- Self-Validating: Generates data models that enforce the constraints defined in the schema

- Self-Testing: Unit tests are generated to test against constraints in models and data structures

- UseCase-Scaffolding: Generates the signature of the functions that interact with external interfaces, and interface with the domain of your application

- Correctness-Validation: Validates that the units/labels of the input model parameters can yield the units/labels of the use case output

- Language-Agnostic: Plugin-based system for generation of code and documentation

Obviously, this is a large undertaking and still in its infancy – but I can see the path that will bring Documentation Driven Development from abstract concept to reality.
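To make the Self-Testing goal a little more concrete, here is a minimal sketch of how test cases might be derived from a range constraint. The function `boundary_cases` and its `step` parameter are hypothetical; nothing here reflects how DeepThought actually generates tests.

```python
from typing import List, Tuple


def boundary_cases(minimum: float, maximum: float, step: float) -> List[Tuple[float, bool]]:
    """Return (value, expected_valid) pairs around both ends of a range."""
    return [
        (minimum - step, False),  # just below the range
        (minimum, True),          # lower bound is inclusive
        (maximum, True),          # upper bound is inclusive
        (maximum + step, False),  # just above the range
    ]


def in_range(value: float) -> bool:
    # Stand-in for a generated model's validation of a 0..12 constraint.
    return 0 <= value <= 12


cases = boundary_cases(minimum=0, maximum=12, step=1)
all_pass = all(in_range(value) == expected for value, expected in cases)
```

Each pair states a value and whether a generated model is expected to accept it, so the same list can drive a unit test in any target language.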

Example Schema

Here is a simplified example of how to model a submersible craft with meaningful data fields and perform an action on the speed of the craft.

Units

    id: fathom
    name: Fathoms
    description: A length measure usually referring to a depth.
    label:
      single: fathom
      plural: fathoms
    symbol: fm
    conversion:
      unit: meter
      conversion: 1.8288

Unit Validating Meta-Schema Specification: https://github.com/constructorfleet/Deepthought-Schema/blob/main/meta-schema/unit.meta.yaml
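A unit definition like the fathom could back a small conversion helper. The sketch below is an assumption about how such a definition might be used, not generated DeepThought output; the `Unit` class and its `factor` field are hypothetical, and `factor` is read as "how many base units make up one of this unit".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    id: str
    symbol: str
    base_unit: str  # the unit named under `conversion.unit`
    factor: float   # the number under `conversion.conversion`

    def to_base(self, value: float) -> float:
        """Convert a value in this unit to the base unit."""
        return value * self.factor

    def from_base(self, value: float) -> float:
        """Convert a value in the base unit to this unit."""
        return value / self.factor


fathom = Unit(id="fathom", symbol="fm", base_unit="meter", factor=1.8288)
depth_in_meters = fathom.to_base(100)
```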

Compound Units

    id: knot
    name: Knot
    description: The velocity of a nautical vessel
    symbol: kn
    unit: # The below yields the equivalent of meter/second
      unit: meter
      dividedBy:
        unit: second

    # Example for showing the extensibility of compound units
    id: gravitational_constant
    name: Gravitational Constant Units
    description: The units for G, the gravitational constant
    label:
      single: meter^3 per (kilogram * second^2)
      plural: meters^3 per (kilogram * second^2)
    symbol: m^3/(kg*s^2)
    unit:
      unit: meter
      exponent: 3
      dividedBy:
        group:
          unit: kilogram
          multipliedBy:
            unit: second
            exponent: 2

Compound Unit Validating Meta-Schema Specification: https://github.com/constructorfleet/Deepthought-Schema/blob/main/meta-schema/compound-units.meta.yaml
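One way to reason about compound units is to reduce them to a map from base unit to exponent. The helpers below (`power`, `multiply`, `divide`) are hypothetical names for illustration, and the knot is treated as meter/second only because the schema comment above does so.

```python
from typing import Dict

Dimensions = Dict[str, int]  # base unit id -> exponent


def power(dims: Dimensions, exponent: int) -> Dimensions:
    """Raise every base unit to the given exponent."""
    return {unit: exp * exponent for unit, exp in dims.items()}


def multiply(left: Dimensions, right: Dimensions) -> Dimensions:
    """Multiply two compound units by adding exponents."""
    result = dict(left)
    for unit, exp in right.items():
        result[unit] = result.get(unit, 0) + exp
    # Drop base units that cancelled out entirely.
    return {unit: exp for unit, exp in result.items() if exp != 0}


def divide(left: Dimensions, right: Dimensions) -> Dimensions:
    """Divide by multiplying with the inverse."""
    return multiply(left, power(right, -1))


meter, second, kilogram = {"meter": 1}, {"second": 1}, {"kilogram": 1}

knot = divide(meter, second)
gravitational_constant = divide(
    power(meter, 3),
    multiply(kilogram, power(second, 2)),
)
```

With this representation, the nested `dividedBy`, `multipliedBy`, and `group` keys map onto plain function calls, and two compound units are equivalent exactly when their exponent maps are equal.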

Fields

    id: depth
    name: Depth
    description: The depth of the submersible
    dataType: number
    unit: fathom
    constraints:
      - minimum: # Explicit unit conversion
          value: 0
          unit: fathom
      - maximum: 100 # Implicit unit conversion
      - precision: 0.1 # Implicit unit conversion

    id: velocity
    type: number
    name: Nautical Speed
    description: The velocity of the craft moving
    unit: knot
    constraints:
      - minimum: 0
      - maximum: 12

Field Validating Meta-Schema Specification: https://github.com/constructorfleet/Deepthought-Schema/blob/main/meta-schema/field.meta.yaml
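A generated, self-validating model for the depth field might look roughly like the following. This is a hand-written sketch, not DeepThought output: `NumberField` and `ConstraintError` are hypothetical, and `precision` is assumed to mean a step size that values are rounded to.

```python
from dataclasses import dataclass
from typing import Optional


class ConstraintError(ValueError):
    """Raised when a value violates a field constraint."""


@dataclass(frozen=True)
class NumberField:
    id: str
    unit: str
    minimum: Optional[float] = None
    maximum: Optional[float] = None
    precision: Optional[float] = None

    def validate(self, value: float) -> float:
        """Coerce the value to the field's precision, then check its range."""
        if self.precision is not None:
            # Assumption: precision is a step size the value is rounded to.
            steps = round(value / self.precision)
            value = round(steps * self.precision, 10)
        if self.minimum is not None and value < self.minimum:
            raise ConstraintError(f"{self.id} below minimum {self.minimum} {self.unit}")
        if self.maximum is not None and value > self.maximum:
            raise ConstraintError(f"{self.id} above maximum {self.maximum} {self.unit}")
        return value


depth = NumberField(id="depth", unit="fathom", minimum=0, maximum=100, precision=0.1)
reading = depth.validate(42.04)
```

Here `reading` comes back rounded to one decimal place, and `depth.validate(101)` raises a `ConstraintError` instead of letting an out-of-range value into the model.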

Models

    id: submersible
    name: Submarine Craft
    description: A craft that can move through an aquatic medium
    fields:
      - depth
      - velocity

Use Cases

    id: adjust_submersible_speed
    name: Adjusts the nautical speed of the craft
    input:
      - submersible
      - speed
    output:
      - submersible

You Have Questions, I Have a Few Answers

What the heck is a meaningful meta-datatype?

Traditionally, a field’s specification is broad, abstract, or meaningless, which leads to writing documentation that attempts to provide meaning to the consumer of the code. We know what an Integer, Float, Byte, String, etc. are – but these are just labels we apply to ways of storing and manipulating data. Even if the author provides sufficient information regarding the acceptable values, ranges, and edge cases, there is still the matter of writing a slew of tests to ensure the fields lie within the specifications. Not to mention hoping the documentation remains in line with the implementation as teams, features, and requirements change and grow.

While still using the same underlying storage and manipulations considered familiar across the landscape, fields in DeepThought attempt to remove the tediousness, prevent stale documentation, and describe what the data actually is. Each field specifies a code-friendly reference name, a human-readable meaningful name, a description, constraints, and coercive manipulations of the underlying data type – plus units if applicable.

What if I don’t want or need units?

That’s perfectly fine! Obviously, you will lose some of the validation that arises from your schema. Then again, unit-less scalars and unit-less physical constants are absolutely a thing.

How can you validate “correctness” of the Use Cases?

When specifying Use Cases, DeepThought has no knowledge of your implementation. Be it a single method or a chain of methods, that is up to you. Specify the input models and the output model, knowing you can trust that your input is valid, tested, and in the exact form that is expected. Through dimensional analysis, it is possible to determine if the output model’s units are possible given the input models’ units. If dimensionally correct, DeepThought will generate the scaffolded code for you to implement – if incorrect, you will know before you even start coding.
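As a rough illustration of that dimensional check, the sketch below asks whether an output unit can be written as a product of small integer powers of the input units. It is a brute-force toy under stated assumptions, not DeepThought's algorithm: `is_reachable`, `max_power`, and the velocity and duration inputs are all hypothetical.

```python
from itertools import product
from typing import Dict, List, Sequence

Dimensions = Dict[str, int]  # base unit id -> exponent


def combine(inputs: List[Dimensions], exponents: Sequence[int]) -> Dimensions:
    """Multiply the inputs together, each raised to its own exponent."""
    total: Dimensions = {}
    for dims, k in zip(inputs, exponents):
        for unit, exp in dims.items():
            total[unit] = total.get(unit, 0) + exp * k
    # Drop base units that cancelled out entirely.
    return {unit: exp for unit, exp in total.items() if exp != 0}


def is_reachable(inputs: List[Dimensions], output: Dimensions, max_power: int = 2) -> bool:
    """True if some combination of input powers yields the output's units."""
    powers = range(-max_power, max_power + 1)
    return any(
        combine(inputs, ks) == output
        for ks in product(powers, repeat=len(inputs))
    )


velocity = {"meter": 1, "second": -1}
duration = {"second": 1}

distance_ok = is_reachable([velocity, duration], {"meter": 1})
mass_ok = is_reachable([velocity, duration], {"kilogram": 1})
```

A distance can be produced from a velocity and a duration, so `distance_ok` is true; no combination of those inputs can produce kilograms, so `mass_ok` is false and the use case would be flagged before any code is written. The search is exponential in the number of inputs, so a real implementation would more likely solve for the exponents directly.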

Follow the Progress of DeepThought

I welcome all input – positive, negative, suggestions, complaints. While I am building this for myself, it would bring me great pleasure to see this adopted by others.