Disregard that this post is around 4 months after the build…

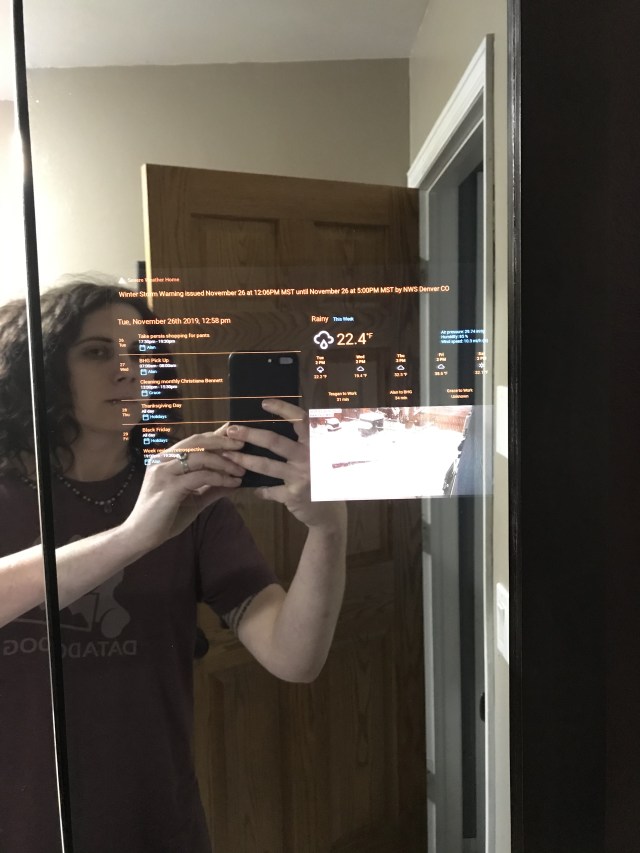

We had just had our bathrooms remodeled and were looking at a medicine cabinet for the upstairs bathroom. Alan and I had both been wanting to build a magic mirror but never had the motivation or a fixture that would work.

We spent weeks trying to find a medicine cabinet that we liked and that would go with the vanity and countertop, and then we saw this one.

The color and style matched the rest of the bathroom, and the second shelf was the perfect height for accommodating the necessary cables and hardware. Losing only one 5-inch section of the middle shelf seemed a small price to pay to design and build something we’d been talking about for years.

The Parts

- Raspberry Pi 3 (we had it lying around)

- 10.1″ Monitor with Pi Mount (They changed the design a bit)

- Custom cut 70/30 SmartMirror Acrylic Sheet

- ATG Tape (Tape for framing art)

The Plan

When the medicine cabinet arrived, we evaluated our options: which side the monitor would go on, whether we would reuse the wood backing of the mirror section, how we would deliver power, and so on.

We decided the right-hand mirror was a good place to mount the monitor. It was closer to the power outlets, wasn’t too much in the way and would be visible to anyone using the sinks. As carefully as we could, we removed the mirror and its wood backing from the medicine cabinet. The question of whether we would reuse the wood backing was answered for us: the mirror was glued to the backing too well, and attempting to separate them shattered the mirror.

Alan grew up working in his Dad’s framing shop and is quite skilled at it, in both the technical aspects (mat cutter, frame nailer) and the subjective aspects (mat color schemes, layers, etc.). This is why we have a 5-foot mat cutter on hand and some black foam board, which was sturdy enough to use as a backing and dark enough that the mirror would reflect as much light as possible.

The Build

Once the acrylic arrived, Alan cut the black foam backing to the size of the wood backing originally attached to the mirror, then cut an exactly sized window in it so the monitor could sit flush against the mirror. With ATG tape along the edges holding both the acrylic and the foam board, it seemed like we were good to go. The monitor was such a tight fit in the window that we didn’t even bother taping it in for extra support.

We needed to install some outlets inside the medicine cabinet. While we were waiting for the parts and motivation, we realized there were a couple of large scratches on the acrylic sheet! That’s what we get for trying to save $30 by getting acrylic instead of glass. So we took the acrylic, the monitor and the backing down, placed an order for Smart Mirror Glass, and waited.

Build #2

Once the glass arrived, we realized that the reflection off the acrylic had been a little distorted compared to the glass. I guess the acrylic just had some surface imperfections. Note: the new glass has a slight blue tone compared to the other mirrors, but it is hardly noticeable.

Before mounting the new glass and Pi to the medicine cabinet, I thought it would be a good idea to cut and insert a 2-gang outlet box in the back of the medicine cabinet. We had a couple of 2 AC/2 USB outlets lying around, which would serve perfectly for charging razors and toothbrushes and for running the Magic Mirror. Unfortunately, there was no way to get the Romex (standard in-wall electrical cable, typically 14/2 or 12/2) to the available outlet without going up into the attic, where there’s barely room to move around and plenty of itchy fiberglass, not to mention that Scooby-Doo has taught me there’s probably an Old Man Jenkins up there disguised as a ghoul. So, I ran it as high as I could through the vanity to the wall with the outlet and fished it up and out.

Repeating our previous steps, we attached the Smart Mirror glass and foam board to the door frame using ATG tape and forced the monitor into its little window. Since the monitor had already been inserted and removed once, it didn’t quite have the same snug fit, so for added support we used a rubber cement that wouldn’t eat through the foam board to secure the monitor in place. Our monitor had mounts for a Raspberry Pi as well as a USB power source for it, which means that if you turn off the monitor, it will turn off the Pi, and vice versa.

Software

We imaged the Raspberry Pi with Raspbian Stretch (primarily because I already had the image on my machine). Once we had set the OS up appropriately on the Pi, connected it to WiFi and set up SSH remote access, we mounted it on the monitor in the medicine cabinet and closed the door.
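For anyone doing this step without a keyboard or screen attached, here is a minimal sketch of the usual Raspbian headless setup: an empty `ssh` file and a `wpa_supplicant.conf` dropped onto the SD card's boot partition before first boot. The function name, mount point, SSID, passphrase and country code are all placeholders for illustration, not values from our build.

```shell
#!/bin/sh
# Sketch: prepare the boot partition of a freshly imaged Raspbian SD card
# for headless use. Every argument is a placeholder chosen for illustration.
prepare_headless_boot() {
    boot_dir="$1"        # where the card's boot partition is mounted
    ssid="$2"            # WiFi network name
    psk="$3"             # WiFi passphrase
    country="${4:-US}"   # two-letter WiFi country code (assumed default)

    # An empty file named "ssh" tells Raspbian to enable the SSH server on boot
    : > "$boot_dir/ssh"

    # Raspbian picks this file up from the boot partition on the next boot
    cat > "$boot_dir/wpa_supplicant.conf" <<EOF
ctrl_interface=DIR=/var/run/wpa_supplicant GROUP=netdev
update_config=1
country=$country

network={
    ssid="$ssid"
    psk="$psk"
}
EOF
}
```

It would be called along the lines of `prepare_headless_boot /media/you/boot "YourNetwork" "YourPassphrase"`, with the path adjusted to wherever your machine mounts the card.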

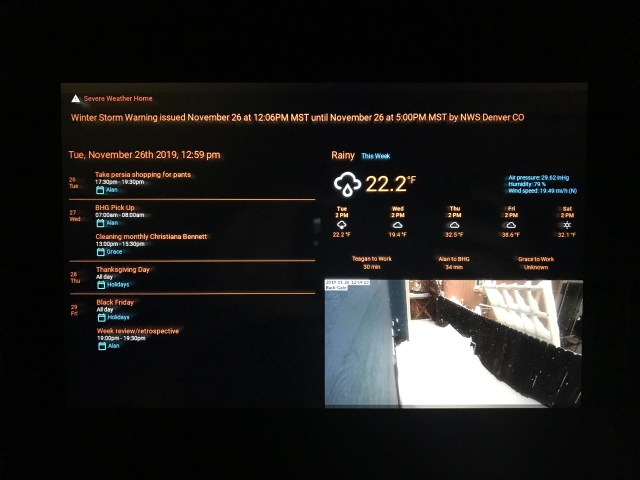

We built a panel specifically for the MagicMirror in Home Assistant, which removes the tabs, sidebar and other extraneous information and uses a dark theme. To access that panel, we needed to install Xorg, which provides the graphical display, since up until now the system had been headless. We used the chromium-browser package because it is simple to use and allows you to open a URL as an app (removing address bar, border, etc.).

We made a dedicated user, aptly named mirror, to run the interface, keeping user roles and purposes separate. In the home folder for mirror we created .xsession (this file defines what happens when the X server starts).
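This setup ends up relying on a handful of executables: startx for Xorg, plus xset, matchbox-window-manager and chromium-browser, which show up in the .xsession file further down. Here is a small sketch for checking that they are all present before rebooting into the mirror. The helper name `missing_commands` is made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: print every command from the argument list
# that cannot be found on PATH, one per line.
missing_commands() {
    for cmd in "$@"; do
        command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
    done
}
```

Running `missing_commands startx xset matchbox-window-manager chromium-browser` prints nothing when everything is installed. On Raspbian the matching packages are most likely xinit, x11-xserver-utils, matchbox-window-manager and chromium-browser, but treat those names as an assumption and check them against your release.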

```sh
#!/bin/sh

# $HA_URL and $HA_PORT are placeholders for the Home Assistant host and port

# Turn off power saving and screen blanking
xset s off -dpms

# Start the window manager in the background, without title bars
matchbox-window-manager -use_titlebar no &

# Launch the browser in app mode (no address bar or border)
chromium-browser --disk-cache-dir=/dev/null --disk-cache-size=1 --app="http://$HA_URL:$HA_PORT/lovelace/mirror?kiosk"
```

To make sure this all happens automatically, create a systemd unit. We chose to place ours at /etc/systemd/system/information-display.service:

```ini
[Unit]
Description=Xserver and Chromium
After=network-online.target nodm.service
Requires=network-online.target nodm.service
Before=multi-user.target
DefaultDependencies=no

[Service]
User=mirror
# Yes, I want to delete the profile so that a new one gets created every time the service starts.
ExecStart=/usr/bin/startx -- -nocursor
Restart=always
RestartSec=10

[Install]
WantedBy=multi-user.target
```

Don’t forget to enable your service with systemctl enable information-display.service and start it with systemctl start information-display.service.
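To confirm that the unit is actually enabled and running after a reboot, something like the following sketch can help. The function name `display_service_state` is made up for this example. It relies only on the standard `systemctl is-enabled` and `systemctl is-active` subcommands, and reports `unknown` when systemctl gives no answer.

```shell
#!/bin/sh
# Hypothetical helper: summarize whether the kiosk unit is enabled and running.
# The unit name defaults to the one used in this post.
display_service_state() {
    unit="${1:-information-display.service}"

    # Both subcommands print a one-word state and exit non-zero for states
    # such as "disabled" or "inactive", so keep the printed word if there is one.
    enabled=$(systemctl is-enabled "$unit" 2>/dev/null) || enabled="${enabled:-unknown}"
    active=$(systemctl is-active "$unit" 2>/dev/null) || active="${active:-unknown}"

    printf '%s: %s, %s\n' "$unit" "$enabled" "$active"
}
```

If the state is anything other than enabled and active, `journalctl -u information-display.service -b` shows what the unit logged during the current boot.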

Coming Soon

Adding voice control to the mirror, e.g. “Where is Alan” or “Activate Night Mode”.